Tesla’s Autopilot Depends upon a Deluge of Knowledge


The idea of smart roads is not new. It includes efforts like traffic lights that automatically adjust their timing based on sensor data and streetlights that automatically adjust their brightness to reduce energy consumption. PerceptIn, of which coauthor Liu is founder and CEO, has demonstrated at its own test track, in Beijing, that streetlight control can make traffic 40 percent more efficient. (Liu and coauthor Gaudiot, Liu’s former doctoral advisor at the University of California, Irvine, often collaborate on autonomous driving projects.)

But these are piecemeal changes. We propose a much more ambitious approach that combines intelligent roads and intelligent vehicles into an integrated, fully intelligent transportation system. The sheer amount and accuracy of the combined information will allow such a system to reach unparalleled levels of safety and efficiency.

Human drivers have a crash rate of 4.2 accidents per million miles; autonomous cars must do much better to gain acceptance. However, there are corner cases, such as blind spots, that afflict both human drivers and autonomous cars, and there is currently no way to handle them without the help of an intelligent infrastructure.

Putting a lot of the intelligence into the infrastructure will also lower the cost of autonomous vehicles. A fully self-driving vehicle is still quite expensive to build. But gradually, as the infrastructure becomes more powerful, it will be possible to transfer more of the computational workload from the vehicles to the roads. Eventually, autonomous vehicles will need to be equipped with only basic perception and control capabilities. We estimate that this transfer will reduce the cost of autonomous vehicles by more than half.

Here’s how it could work: It’s Beijing on a Sunday morning, and sandstorms have turned the sun blue and the sky yellow. You’re driving through the city, but neither you nor any other driver on the road has a clear view. Each car, however, as it moves along, discerns a piece of the puzzle. That information, combined with data from sensors embedded in or near the road and from relays from weather services, feeds into a distributed computing system that uses artificial intelligence to construct a single model of the environment, one that can recognize static objects along the road as well as objects moving along each car’s projected path.

Properly expanded, this approach can prevent most accidents and traffic jams, problems that have plagued road transport since the introduction of the automobile. It can deliver what a self-sufficient autonomous car aims for without demanding more than any one car can provide. Even in a Beijing sandstorm, every person in every car will arrive at their destination safely and on time.


So far, we have deployed a model of this system in several cities in China as well as on our test track in Beijing. For instance, in Suzhou, a city of 11 million west of Shanghai, the deployment is on a public road with three lanes on each side, with phase one of the project covering 15 kilometers of highway. A roadside system is deployed every 150 meters along the road, and each roadside system consists of a compute unit equipped with an Intel CPU and an Nvidia 1080Ti GPU, a series of sensors (lidars, cameras, radars), and a communication component (a roadside unit, or RSU). Lidar is included because it provides more accurate perception than cameras do, especially at night. The RSUs then communicate directly with the deployed vehicles so that the roadside data and the vehicle-side data can be fused on the vehicle.
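As a quick check on the scale of phase one, the spacing figures above imply roughly a hundred roadside systems. The sketch below does that arithmetic; whether a unit also sits at both ends of the stretch is an assumption exposed as a parameter, not something stated in the text.

```python
def roadside_units(route_km: float, spacing_m: float, include_both_ends: bool = False) -> int:
    """Count roadside systems needed to cover a route at a fixed spacing.

    include_both_ends is an assumption: if True, a unit is placed at the
    start and at the end of the route (fence-post counting).
    """
    route_m = route_km * 1000
    intervals = int(route_m // spacing_m)  # whole spacing intervals along the route
    return intervals + 1 if include_both_ends else intervals


if __name__ == "__main__":
    # Suzhou phase one: 15 km of highway, one roadside system every 150 m.
    print(roadside_units(15, 150))
    print(roadside_units(15, 150, include_both_ends=True))
```

With 15 km and 150 m spacing this gives 100 units, or 101 if both ends are equipped.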

Sensors and relays along the roadside make up one half of the cooperative autonomous driving system, with the hardware on the vehicles themselves making up the other half. In a typical deployment, our model employs 20 vehicles. Each vehicle carries a computing system, a suite of sensors, an engine control unit (ECU), and, to connect these components, a controller area network (CAN) bus. The road infrastructure, as described above, consists of similar but more advanced equipment. The roadside system’s high-end Nvidia GPU communicates wirelessly through its RSU, whose counterpart on the car is called the onboard unit (OBU). This back-and-forth communication enables the fusion of roadside data and car data.

The infrastructure collects data on the local environment and shares it immediately with the cars, thereby eliminating blind spots and otherwise extending perception in obvious ways. The infrastructure also processes data from its own sensors and from sensors on the cars to extract meaning, producing what’s called semantic data. Semantic data might, for instance, identify an object as a pedestrian and locate that pedestrian on a map. The results are then sent to the cloud, where more elaborate processing fuses that semantic data with data from other sources to generate global perception and planning information. The cloud then dispatches global traffic information, navigation plans, and control commands to the cars.
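To make “semantic data” concrete: instead of raw sensor frames, the roadside unit can send compact records such as “pedestrian at this map position.” The sketch below is a hypothetical message format, serialized as JSON purely for readability; the field names, the labels, and the choice of JSON are all assumptions, not the deployed protocol.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class SemanticObject:
    """One detected object after roadside processing (hypothetical schema)."""
    object_id: int
    label: str         # e.g. "pedestrian", "car", "bicycle"
    x_m: float         # map position, meters east of an assumed reference point
    y_m: float         # map position, meters north of an assumed reference point
    confidence: float  # detector confidence in [0, 1]


def encode_frame(rsu_id: str, timestamp_ms: int, objects: list[SemanticObject]) -> bytes:
    """Pack one perception frame into a compact JSON payload."""
    payload = {
        "rsu": rsu_id,
        "t_ms": timestamp_ms,
        "objects": [asdict(o) for o in objects],
    }
    return json.dumps(payload, separators=(",", ":")).encode("utf-8")


def decode_frame(data: bytes) -> tuple[str, int, list[SemanticObject]]:
    """Inverse of encode_frame."""
    payload = json.loads(data.decode("utf-8"))
    objects = [SemanticObject(**o) for o in payload["objects"]]
    return payload["rsu"], payload["t_ms"], objects


if __name__ == "__main__":
    ped = SemanticObject(object_id=7, label="pedestrian", x_m=12.5, y_m=-3.0, confidence=0.94)
    msg = encode_frame("rsu-042", 1_000, [ped])
    print(len(msg), "bytes:", decode_frame(msg))
```

A record like this is on the order of a hundred bytes, far smaller than the sensor frames it summarizes.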

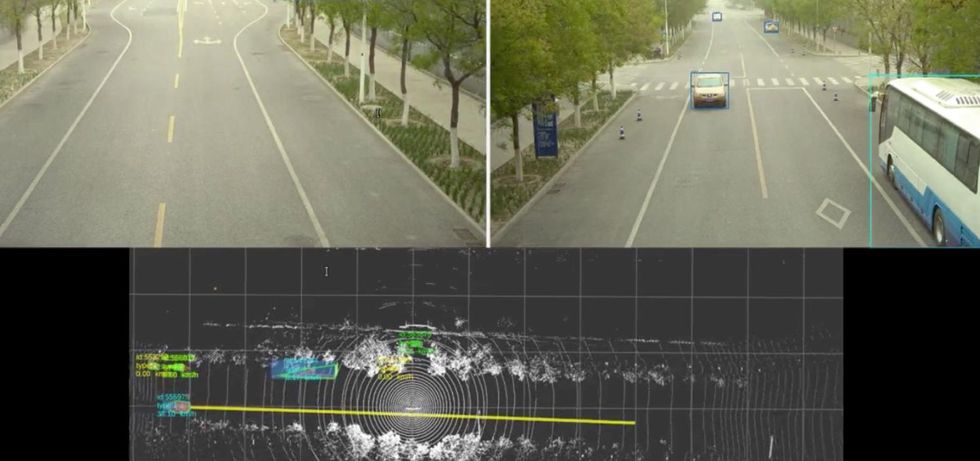

Each car at our test track starts in self-driving mode, that is, at a level of autonomy that today’s best systems can manage. Each car is equipped with six millimeter-wave radars for detecting and tracking objects, eight cameras for two-dimensional perception, one lidar for three-dimensional perception, and GPS and inertial guidance to locate the vehicle on a digital map. The 2D- and 3D-perception results, as well as the radar outputs, are fused to generate a comprehensive view of the road and its immediate surroundings.
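One simple way to picture this fusion step is late fusion: each sensor pipeline produces its own detections, and a fusion stage associates them and keeps the attribute each sensor is best at. The sketch below assumes all detections are already in a common vehicle frame, associates them greedily by nearest neighbor within an assumed 2 m gate, and takes position from lidar, speed from radar, and the class label from the camera. It illustrates the general technique, not necessarily what runs on the test vehicles.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class LidarBox:
    x: float
    y: float


@dataclass
class RadarHit:
    x: float
    y: float
    speed_mps: float  # Doppler speed reported by the radar


@dataclass
class CameraDet:
    x: float
    y: float
    label: str


@dataclass
class FusedObject:
    x: float
    y: float
    speed_mps: Optional[float]
    label: Optional[str]


def _nearest(x, y, candidates, gate_m):
    """Closest candidate to (x, y) within gate_m meters, or None."""
    best, best_d = None, gate_m
    for c in candidates:
        d = math.hypot(c.x - x, c.y - y)
        if d <= best_d:
            best, best_d = c, d
    return best


def fuse(lidar, radar, camera, gate_m=2.0):
    """Greedy late fusion anchored on lidar boxes (a radar or camera detection may match twice)."""
    fused = []
    for box in lidar:
        r = _nearest(box.x, box.y, radar, gate_m)
        c = _nearest(box.x, box.y, camera, gate_m)
        fused.append(FusedObject(
            x=box.x,
            y=box.y,
            speed_mps=r.speed_mps if r else None,  # no radar match: speed unknown
            label=c.label if c else None,          # no camera match: class unknown
        ))
    return fused


if __name__ == "__main__":
    print(fuse([LidarBox(20.0, 1.0)], [RadarHit(20.5, 1.2, 8.3)], [CameraDet(19.8, 0.9, "bicycle")]))
```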

Next, these perception results are fed into a module that keeps track of each detected object (say, a car, a bicycle, or a rolling tire), drawing a trajectory that can be fed to the next module, which predicts where the target object will go. Finally, these predictions are handed off to the planning and control modules, which steer the autonomous vehicle. The car builds a model of its environment out to 70 meters. All of this computation happens within the car itself.
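The hand-off from tracking to prediction can be illustrated with the simplest possible predictor: keep a short position history per tracked object, estimate velocity from the last two samples, and extrapolate at constant velocity. Production prediction modules are far more sophisticated; the constant-velocity model, the 0.1 s step, and the names below are assumptions for illustration. The 70 m constant mirrors the onboard modeling range stated above.

```python
from collections import deque

ONBOARD_RANGE_M = 70.0  # the car models its surroundings out to about 70 m


class Track:
    """Short position history for one tracked object (a car, a bicycle, a rolling tire)."""

    def __init__(self, track_id: int, maxlen: int = 10):
        self.track_id = track_id
        self.history = deque(maxlen=maxlen)  # (t_s, x_m, y_m), oldest first

    def update(self, t_s: float, x_m: float, y_m: float) -> None:
        self.history.append((t_s, x_m, y_m))

    def predict(self, horizon_s: float, step_s: float = 0.1) -> list[tuple[float, float]]:
        """Extrapolate future (x, y) positions assuming constant velocity."""
        if len(self.history) < 2:
            return []  # two samples are needed to estimate a velocity
        (t0, x0, y0), (t1, x1, y1) = self.history[-2], self.history[-1]
        dt = t1 - t0
        vx, vy = (x1 - x0) / dt, (y1 - y0) / dt
        steps = int(round(horizon_s / step_s))
        return [(x1 + vx * k * step_s, y1 + vy * k * step_s) for k in range(1, steps + 1)]


def in_onboard_range(x_m: float, y_m: float) -> bool:
    """True if a point in the car's frame lies within the onboard modeling range."""
    return (x_m ** 2 + y_m ** 2) ** 0.5 <= ONBOARD_RANGE_M


if __name__ == "__main__":
    bike = Track(track_id=1)
    bike.update(0.0, 10.0, 0.0)
    bike.update(0.1, 11.0, 0.0)  # moving at about 10 m/s along x
    path = bike.predict(horizon_s=0.3)
    print(path, all(in_onboard_range(x, y) for x, y in path))
```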

Meanwhile, the intelligent infrastructure is doing the same job of detection and tracking with radars, as well as 2D modeling with cameras and 3D modeling with lidar, finally fusing that data into a model of its own to complement what each car is doing. Because the infrastructure is spread out, it can model the world as far out as 250 meters. The tracking and prediction modules on the cars then merge the wider and the narrower models into a comprehensive view.
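The merge of the car’s narrower model with the infrastructure’s wider one can be sketched as an object-list union: a roadside object lying close to an object the car already sees is treated as a duplicate, and everything else (objects hidden from the car, or beyond its own range) is added. The 1.5 m duplicate threshold, the tuple representation, and the assumption that both lists are already in the car’s coordinate frame are illustrative choices, not details of the deployment.

```python
import math

Obj = tuple[str, float, float]  # (label, x_m, y_m), assumed to be in the car's frame


def merge_models(onboard: list[Obj], roadside: list[Obj], dup_threshold_m: float = 1.5) -> list[Obj]:
    """Union of onboard and roadside objects, dropping roadside duplicates."""
    merged = list(onboard)  # the car's own detections are kept as-is
    for label, x, y in roadside:
        duplicate = any(
            math.hypot(x - ox, y - oy) < dup_threshold_m for _, ox, oy in onboard
        )
        if not duplicate:
            merged.append((label, x, y))  # blind-spot or long-range object from the roadside
    return merged


if __name__ == "__main__":
    onboard = [("car", 20.0, 0.0)]
    roadside = [("car", 20.4, 0.3), ("pedestrian", 180.0, 5.0)]
    print(merge_models(onboard, roadside))
```

Here the roadside copy of the car 20 m ahead is dropped as a duplicate, while the pedestrian 180 m out, beyond the car’s own range, is added.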

The car’s onboard unit communicates with its roadside counterpart so that the data can be fused in the vehicle. The wireless standard, called Cellular-V2X (for “vehicle-to-X”), is not unlike the one used in phones; communication can reach as far as 300 meters, and the latency (the time it takes for a message to get through) is about 25 milliseconds. With this link in place, many of the car’s blind spots are covered by the infrastructure’s system.
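A useful way to read the 25 ms latency figure is in meters: while a message is in flight, the vehicle keeps moving. The sketch below converts speed and latency into distance traveled; the speeds are only examples.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Meters a vehicle covers while a message is in transit."""
    speed_mps = speed_kmh / 3.6            # km/h -> m/s
    return speed_mps * latency_ms / 1000   # ms -> s


if __name__ == "__main__":
    for speed in (50, 100):
        print(f"{speed} km/h, 25 ms: {distance_during_latency(speed, 25):.2f} m")
```

At 50 km/h a 25 ms delay corresponds to about 0.35 m of travel, and at 100 km/h to about 0.69 m.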

Two modes of communication are supported: LTE-V2X, a variant of the cellular standard reserved for vehicle-to-infrastructure exchanges, and commercial mobile networks using the LTE and 5G standards. LTE-V2X is dedicated to direct communication between the road and the cars over a range of 300 meters. Although its latency is only 25 ms, it comes with low bandwidth, currently about 100 kilobytes per second.

In contrast, commercial 4G and 5G networks have effectively unlimited range and significantly higher bandwidth (100 megabits per second for downlink and 50 Mb/s for uplink for commercial LTE). However, they have much greater latency, which poses a significant challenge for the moment-to-moment decision-making of autonomous driving.
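The trade-off between the two links can be estimated with a back-of-the-envelope delivery time: latency plus payload size divided by bandwidth. The sketch below uses the LTE-V2X figures quoted above (25 ms, about 100 kilobytes per second). The commercial-network latency and bandwidth, and both payload sizes, are placeholder assumptions; the point is the shape of the trade-off, not the exact numbers.

```python
from dataclasses import dataclass


@dataclass
class Link:
    name: str
    latency_ms: float
    bandwidth_kb_per_s: float  # kilobytes per second


def delivery_time_ms(link: Link, payload_kb: float) -> float:
    """Rough one-way delivery time: fixed latency plus transmission time."""
    return link.latency_ms + payload_kb / link.bandwidth_kb_per_s * 1000


LTE_V2X = Link("LTE-V2X", latency_ms=25, bandwidth_kb_per_s=100)  # figures from the text
COMMERCIAL = Link("commercial LTE/5G", latency_ms=60, bandwidth_kb_per_s=10_000)  # placeholder assumption

if __name__ == "__main__":
    # Assumed sizes: a compact semantic object list vs. a bulky sensor frame.
    for payload_kb in (2, 1_000):
        for link in (LTE_V2X, COMMERCIAL):
            print(f"{payload_kb:>5} KB over {link.name}: {delivery_time_ms(link, payload_kb):.1f} ms")
```

Under these assumptions a 2 KB semantic frame arrives sooner over LTE-V2X (about 45 ms versus about 60 ms), while a 1,000 KB frame would take roughly 10 s over LTE-V2X, which suggests why compact, processed data suits the direct link.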

Note that when a vehicle travels at 50 kilometers per hour (31 miles per hour), its stopping distance is 35 meters when the road is dry and 41 meters when it is slick. Therefore, the 250-meter perception range that the infrastructure provides gives the vehicle a large margin of safety. On our test track, the disengagement rate (the frequency with which the safety driver must override the automated driving system) is at least 90 percent lower when the infrastructure’s intelligence is turned on to augment the autonomous car’s onboard system.
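The stopping distances can be roughly reproduced with the standard textbook model: reaction distance (speed times reaction time) plus braking distance v²/(2μg). The 1.5 s reaction time and the friction coefficients of 0.7 (dry) and 0.5 (slick) are assumptions chosen for illustration; with them the model gives about 34.9 m and about 40.5 m, close to the figures quoted above, and leaves more than 200 m of the 250 m perception range as margin.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stopping_distance_m(speed_kmh: float, mu: float, reaction_s: float = 1.5) -> float:
    """Reaction distance plus braking distance on a level road (assumed model)."""
    v = speed_kmh / 3.6              # km/h -> m/s
    reaction = v * reaction_s        # distance covered before the brakes engage
    braking = v ** 2 / (2 * mu * G)  # kinematic braking distance, friction coefficient mu
    return reaction + braking


PERCEPTION_RANGE_M = 250  # infrastructure-assisted perception range from the text

if __name__ == "__main__":
    for surface, mu in (("dry", 0.7), ("slick", 0.5)):
        d = stopping_distance_m(50, mu)
        print(f"{surface}: stop in {d:.1f} m, margin {PERCEPTION_RANGE_M - d:.1f} m")
```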

Experiments on our test track have taught us two things. First, because traffic conditions change throughout the day, the infrastructure’s computing units are fully in harness during rush hours but largely idle in off-peak hours. This is more a feature than a bug, because it frees up much of the enormous roadside computing power for other tasks, such as optimizing the system. Second, we find that we can indeed optimize the system, because our growing trove of local perception data can be used to fine-tune our deep-learning models to sharpen perception. By combining idle compute power with the archive of sensory data, we have been able to improve performance without imposing any additional burden on the cloud.
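Harvesting off-peak capacity can start with a simple utilization check: when a roadside unit’s live perception load falls below a threshold, it runs a queued fine-tuning job on its archive of local data. The sketch below is hypothetical; the 30 percent threshold, the hourly load profile, and the job names are made up for illustration, and a real scheduler would also need to preempt background work when traffic picks up.

```python
from collections import deque

IDLE_THRESHOLD = 0.30  # assumed: below 30% GPU load, the unit counts as idle


def schedule_background_jobs(hourly_load: dict[int, float], jobs: list[str]) -> dict[int, str]:
    """Assign queued jobs (e.g. model fine-tuning runs) to idle hours, in order."""
    queue = deque(jobs)
    plan: dict[int, str] = {}
    for hour in sorted(hourly_load):
        if not queue:
            break
        if hourly_load[hour] < IDLE_THRESHOLD:
            plan[hour] = queue.popleft()
    return plan


if __name__ == "__main__":
    # Made-up load profile: busy at rush hours, quiet overnight.
    load = {2: 0.05, 3: 0.04, 8: 0.95, 13: 0.40, 18: 0.97, 23: 0.20}
    jobs = ["finetune-lidar-detector", "finetune-camera-detector", "finetune-tracker"]
    print(schedule_background_jobs(load, jobs))
```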

It’s hard to get people to agree to build a vast system whose promised benefits will come only after it has been completed. To solve this chicken-and-egg problem, we must proceed through three consecutive stages:

Stage 1: infrastructure-augmented autonomous driving, in which the vehicles fuse vehicle-side perception data with roadside perception data to improve the safety of autonomous driving. Vehicles will still be heavily loaded with self-driving equipment.

Stage 2: infrastructure-guided autonomous driving, in which the vehicles can offload all perception tasks to the infrastructure to reduce per-vehicle deployment costs. For safety reasons, basic perception capabilities will remain on the autonomous vehicles in case communication with the infrastructure goes down or the infrastructure itself fails. Vehicles will need notably less sensing and processing hardware than in stage 1.

Stage 3: infrastructure-planned autonomous driving, in which the infrastructure is charged with both perception and planning, thus achieving maximum safety, traffic efficiency, and cost savings. In this stage, the vehicles are equipped with only very basic sensing and computing capabilities.
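The three stages differ mainly in where perception and planning run. One way to make that explicit is a small lookup table with an onboard fallback for when the link or the infrastructure fails. The representation below is an illustrative sketch; the strings simply paraphrase the stage descriptions, and the assumption that planning stays on the vehicle in stage 2 is inferred from the fact that only stage 3 moves planning to the infrastructure.

```python
from enum import Enum


class Stage(Enum):
    AUGMENTED = 1  # infrastructure-augmented
    GUIDED = 2     # infrastructure-guided
    PLANNED = 3    # infrastructure-planned


# Who performs each task at each stage (paraphrasing the stage descriptions).
RESPONSIBILITY = {
    Stage.AUGMENTED: {"perception": "vehicle + roadside (fused in vehicle)", "planning": "vehicle"},
    Stage.GUIDED: {"perception": "roadside (basic onboard fallback)", "planning": "vehicle"},
    Stage.PLANNED: {"perception": "roadside (basic onboard fallback)", "planning": "roadside"},
}


def perception_source(stage: Stage, infrastructure_ok: bool) -> str:
    """Where perception comes from, falling back to onboard sensing on failure."""
    if not infrastructure_ok:
        # Stage 1 cars still carry a full self-driving kit; later stages keep only basic sensing.
        if stage is Stage.AUGMENTED:
            return "vehicle (full onboard perception)"
        return "vehicle (basic onboard perception)"
    return RESPONSIBILITY[stage]["perception"]


if __name__ == "__main__":
    for stage in Stage:
        print(stage.name, perception_source(stage, True), "| link down:", perception_source(stage, False))
```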

Technical challenges do exist. The first is network stability. At high vehicle speeds, fusing vehicle-side and infrastructure-side data is extremely sensitive to network jitter. On commercial 4G and 5G networks, we have observed jitter ranging from 3 to 100 ms, enough to effectively prevent the infrastructure from helping the car. Even more critical is security: We need to ensure that a hacker cannot attack the communication network, or even the infrastructure itself, to pass incorrect information to the cars, with potentially lethal consequences.
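Jitter matters because fusion has to know how old each roadside message is. One mitigation, sketched here under assumptions, is to timestamp each message at the source, extrapolate the reported object to the current time using its velocity, and drop messages too old to trust. The sketch assumes synchronized clocks (for example, via GPS time), and the 50 ms maximum age is a placeholder, not a figure from the deployment. Under that tolerance, a message delayed by 100 ms, the upper end of the jitter quoted above, would be rejected.

```python
from dataclasses import dataclass
from typing import Optional

MAX_AGE_MS = 50.0  # placeholder tolerance; older messages are discarded


@dataclass
class RoadsideObservation:
    sent_ms: float  # source timestamp; assumes synchronized clocks
    x_m: float      # object position in a fixed road frame
    y_m: float
    vx_mps: float   # object velocity estimated by the roadside tracker
    vy_mps: float


def compensate(obs: RoadsideObservation, now_ms: float) -> Optional[tuple[float, float]]:
    """Extrapolate the object to now_ms, or return None if the message is unusable."""
    age_ms = now_ms - obs.sent_ms
    if age_ms < 0 or age_ms > MAX_AGE_MS:
        return None  # clock skew or too stale: skip rather than fuse a wrong position
    age_s = age_ms / 1000
    return (obs.x_m + obs.vx_mps * age_s, obs.y_m + obs.vy_mps * age_s)


if __name__ == "__main__":
    obs = RoadsideObservation(sent_ms=1000.0, x_m=100.0, y_m=3.5, vx_mps=13.9, vy_mps=0.0)
    print(compensate(obs, now_ms=1025.0))  # 25 ms old: position shifted about 0.35 m
    print(compensate(obs, now_ms=1100.0))  # 100 ms old: rejected
```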

Another problem is how to gain widespread support for autonomous driving of any kind, let alone one based on smart roads. In China, 74 percent of people surveyed favor the rapid introduction of automated driving, whereas in other countries public support is more hesitant. Only 33 percent of Germans and 31 percent of people in the United States support the rapid expansion of autonomous vehicles. Perhaps the well-established car culture in these two countries has made people more attached to driving their own cars.

Then there is the problem of jurisdictional conflicts. In the United States, for instance, authority over roads is distributed among the Federal Highway Administration, which oversees the interstate highway system, and state and local governments, which have authority over other roads. It is not always clear which level of government is responsible for authorizing, managing, and paying for upgrading the existing infrastructure to smart roads. In recent years, much of the transportation innovation in the United States has happened at the local level.

By contrast, China has mapped out a new set of measures to bolster research and development of key technologies for intelligent road infrastructure. A policy document published by the Chinese Ministry of Transport aims for cooperative systems between vehicles and road infrastructure by 2025. The Chinese government intends to incorporate into new infrastructure such smart elements as sensing networks, communications systems, and cloud control systems. Cooperation among carmakers, high-tech firms, and telecommunications service providers has spawned autonomous driving startups in Beijing, Shanghai, and Changsha, a city of 8 million in Hunan province.

An infrastructure-vehicle cooperative driving approach promises to be safer, more efficient, and more economical than a strictly vehicle-only approach to autonomous driving. The technology is here, and it is being implemented in China. To do the same in the United States and elsewhere, policymakers and the public must embrace the approach and give up today’s model of vehicle-only autonomous driving. In any case, we will soon see these two vastly different approaches to automated driving competing in the world transportation market.
